I Tested Gemma 4 for Local Agentic AI: Architecture, Benchmarks, Prompting, and Deployment Lessons

Gemma 4: My Researcher's Field Notes on Open Weights, Local Agents, and What Actually Breaks in Deployment

Cold Start: Why I Spent Weeks Testing Gemma 4 Instead of Just Tweeting About It

I will be honest: when Gemma 4 dropped, I expected another "good open model" launch cycle. A week of hype, benchmark screenshots, and then silence. But once I started testing it in real workflows, I realized this release is not just about model quality. It is about control.

As an AI student who spends more time experimenting than sleeping, I have been stuck in the same trade-off everyone else has been stuck in:

- Use cloud frontier models and give up data locality.

- Use local models and give up serious reasoning depth, stable tool use, and long-context reliability.

Gemma 4 is one of the first open-weight families where I could feel that trade-off shifting in practice. It is built on research aligned with Gemini-class architecture, but shipped with Apache 2.0 licensing, which changes the business and technical game immediately.1 You are not boxed in by MAU limits, unclear commercialization clauses, or restrictive acceptable-use gates tied to an external API account.1

That licensing part sounds boring until you are building actual products. Then it becomes everything.

What Changed: From Cloud-Dependent Chat to Local Agentic Loops

The biggest shift I observed is this: Gemma 4 is designed for stateful, tool-using agent workflows, not only single-turn chat.2,5 In plain words, it is built for systems that can plan, call tools, inspect outputs, and iterate.

That matters because most so-called "AI assistants" are still stateless text generators in disguise.4 They output tokens, not execution loops. Once you start building local agents for coding, research automation, or multimodal tasks, that distinction becomes brutally obvious.

I kept testing this with a simple internal rule:

- If the model can reason but cannot hold a clean tool loop, it is not agent-ready.

- If it can hold a loop but melts under context pressure, it is not production-ready.

- If it needs cloud exfiltration for every serious task, it is not privacy-ready.

Gemma 4 does not magically solve every engineering problem, but it is one of the first open families that is explicitly trying to solve all three at once.2,5

[!NOTE] Lab Log #1 - Week 1 observation: My first stable wins came when I stopped treating Gemma 4 like a chatbot and started treating it like an execution engine. The moment I forced clean tool boundaries and context hygiene, reliability jumped.

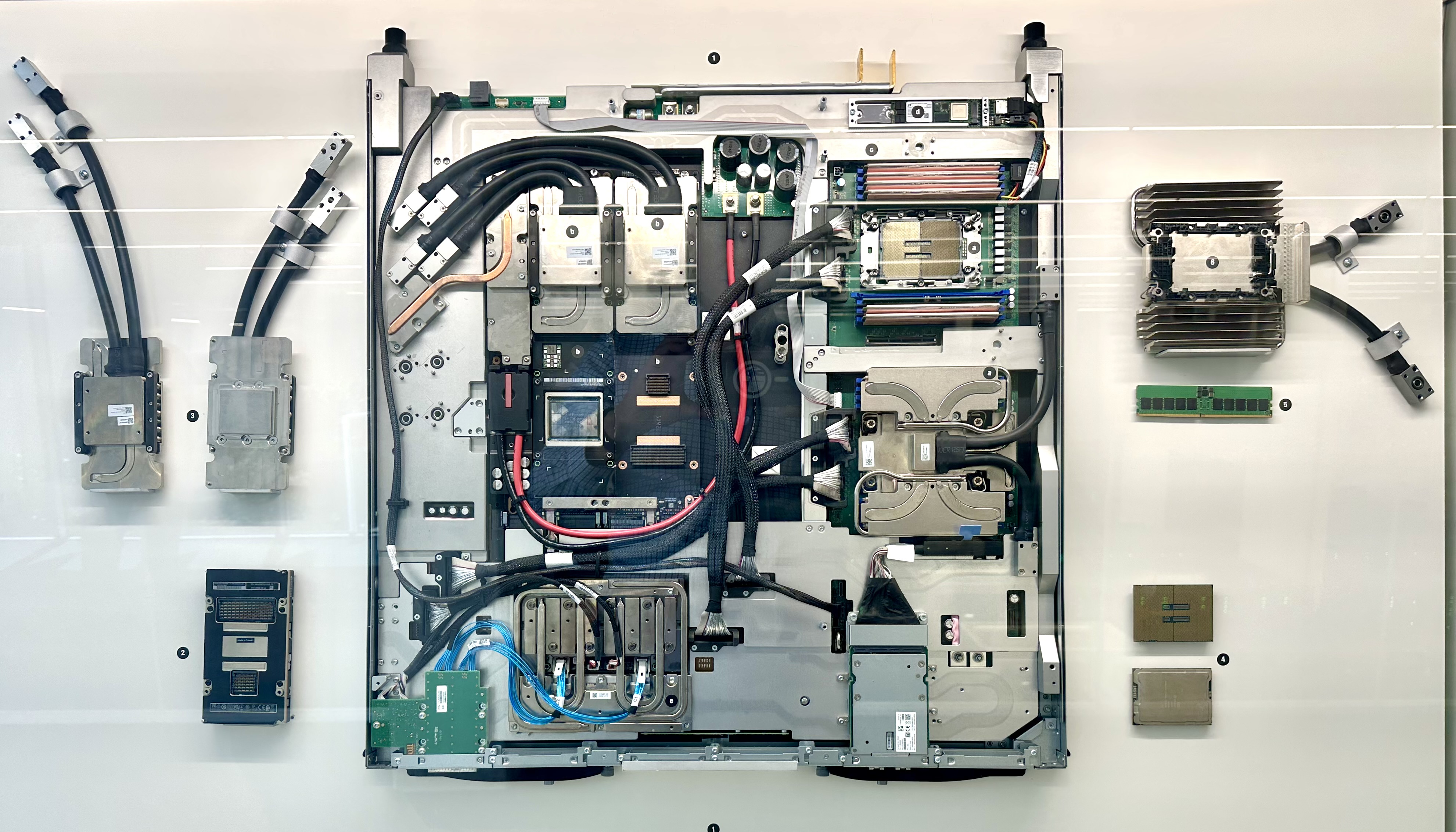

Architecture Pivot: Not Bigger-For-The-Sake-of-Bigger, But Hardware-Aligned Design

Older open models often followed a homogeneous scaling logic: increase parameters, increase layers, increase hidden dimensions, repeat. Gemma 4 feels different. It is not one monolithic model; it is a hardware-aware portfolio with purpose-built topologies.3,6

It also moves beyond simple text/text-image assumptions, supporting broader multimodal paths (text, image, and for edge variants, audio/video support) with long context windows reaching 256K in larger variants.6,8

Model Variants I Tracked During Testing

| Model Variant | Active Parameters (Per Token) | Total Parameters | Architecture Type | Context Window | Supported Modalities | Target Hardware Environment |

|---|---|---|---|---|---|---|

| E2B | 2.3B | 5.1B | Dense + PLE | 128K | Text, Image, Audio, Video | Mobile, IoT, Raspberry Pi, Jetson |

| E4B | 4.5B | 8.0B | Dense + PLE | 128K | Text, Image, Audio, Video | Mobile, Edge Devices |

| 26B-A4B | 3.8B | 25.2B | MoE (128 Experts) | 256K | Text, Image, Video | Local Workstations, Consumer GPUs |

| 31B | 30.7B | 30.7B | Dense | 256K | Text, Image, Video | Enterprise Servers, Cloud |

One under-discussed practical feature: vision token budget control. You can choose image token budgets (70, 140, 280, 560, 1120) and explicitly trade spatial fidelity vs latency.6 That is the kind of control you appreciate only after watching multimodal requests explode your token budget in production.

Quick Scan: Why this architecture shift matters

- It aligns model topology to hardware constraints instead of brute-force parameter scaling.

- It gives practical knobs (vision token budgets, active parameter routing) that map to real deployment costs.

- It reduces the gap between "open model demo" and "production local agent loop."

The Edge Story: Why PLE in E2B/E4B Is More Important Than It Looks

The "E" in E2B/E4B means effective parameters.2 That wording sounds like branding until you inspect the mechanics.

Classic transformer embedding paths map token IDs once, then push that representation through the full residual stream.6 Gemma 4 edge variants add Per-Layer Embeddings (PLE), where embedding output is bifurcated:

- standard token tensor:

[batch, seq, hidden_size] - per-layer signal tensor:

[batch, seq, num_hidden_layers, hidden_size_per_layer_input]10

In E2B, with 35 hidden layers, that second path is shaped like [batch, seq, 35, 256].8 Each layer gets a dedicated slice and injects it as local residual guidance.6

When I first read that, I thought, "Interesting paper idea." When I mapped it to deployment constraints, it clicked:

- You still store 5.1B total parameters in memory for E2B.11

- But per-token compute only activates 2.3B effective parameters.11

So representational capacity and inference cost are partially decoupled.7,11 For edge devices, that is huge. It means the model can act "larger" semantically while behaving "smaller" computationally.

Another subtle detail I appreciated: in multimodal positions where text placeholders get overwritten by continuous embeddings, PLE uses pad-token behavior for those locations to avoid contaminating visual/audio signal flow.6 This is exactly the kind of engineering detail that prevents weird multimodal instability.

The Workstation Story: Why 26B-A4B MoE Feels Like a Cheat Code (If You Have VRAM)

If you care about local-first coding agents, the 26B-A4B model is probably the most strategically interesting in the lineup.

It uses a granular Mixture-of-Experts design (128 experts, 8 selected + 1 shared expert active per token).8,12 Compared to older coarse MoE routing patterns, this gives tighter specialization while preserving a stable shared transformation path.8,12

The number that matters operationally:

- total resident parameters: 25.2B

- active per-token compute: 3.8B3

That means speed characteristics can resemble much smaller dense models, while quality trends much closer to large dense behavior.12 On reported evaluations, the gap to the 31B dense variant is small (LMArena estimate ~1441 vs ~1452) relative to compute profile.6

But here is the catch that people skip in viral posts: memory planning is still brutal. You still need to hold the full expert pool in memory (for fp16-style storage assumptions, large VRAM footprints are expected).14 If your hardware is not ready, active parameter efficiency does not save you.

Long Context in Practice: Alternating Attention, Dual RoPE, and Shared KV Caches

Everyone loves saying "256K context" in marketing decks. Very few discuss what happens to memory bandwidth when you actually use it.

Gemma 4 tackles this with a stack of techniques:

- Alternating attention (local sliding + global full-context layers)3

- Dual RoPE strategy (standard RoPE for local, p-RoPE interpolation for global)3,8

- Shared KV cache behavior in upper decoder layers6

Local window sizes differ by variant class (e.g., 512 vs 1024 token windows).6 The result is a model that can process local syntax efficiently while still preserving long-range semantic retrieval.3

I especially like the Shared KV design direction: upper layers can reuse KV projections from the last non-shared layer of the same attention type rather than recomputing every layer independently.6 In long-session systems, that directly translates into lower memory pressure and better serving economics.

If you are building long-context RAG, codebase analysis tools, or multi-file autonomous agents, this architecture is not a nice-to-have detail. It is the difference between stable throughput and random OOM incidents.

The Agentic Leap: From Prompting to Goal-Driven Execution Loops

I tested Gemma 4 through the lens of agentic behavior, not just answer quality.

A chatbot predicts a response and exits.4 An agent takes a goal, decomposes tasks, calls tools, checks outputs, and loops until done.4

Gemma 4 is explicitly optimized toward the second mode.5 That includes local mobile paths (AICore/ML Kit routes) and desktop coding assistance paths (Android Studio Agent Mode with local providers).5

[!TIP] Lab Log #2 - Prompting habit that improved results: I now write prompts as workflow contracts, not questions. Goal, tool scope, stop criteria, and failure behavior go in up front. This reduced random loop drift significantly.

On-Device Path (Android AICore + ML Kit)

For Android, the practical implication is straightforward: developers can ship on-device intelligence where data stays local, latency drops, and cloud dependency weakens.5 Google reports significant speed and energy gains for supported hardware classes (e.g., up to ~4x speedups and lower battery draw under specific conditions).5

The model-choice strategy is also clear:

- E2B when speed and responsiveness dominate

- E4B when deeper reasoning/logic quality is worth extra compute5

And yes, multimodal input is not theoretical. You can pipeline OCR + visual context into prompt flows using ML Kit primitives and Gemma 4 prompt APIs.5

Local Coding Agents (Android Studio + Local Provider)

What got my attention most is local agentic coding in Android Studio tied to Gemma 4 MoE paths.5

Why this matters:

- Enterprises with compliance constraints can keep source code local.

- Developers still get autonomous loop behavior (edit, build, inspect logs, patch, retry).

Operationally, setup still requires real hardware (minimum memory/storage expectations are non-trivial).5 This is not "runs everywhere" magic. But it is a major move toward local AI coding assistants that do not require shipping your repo to a third-party API every few minutes.

Prompt Engineering Reality: If You Ignore Token Syntax, You Will Break the Agent Loop

I cannot stress this enough: with Gemma 4, prompt formatting is part of system design, not cosmetic prompt engineering.

The tokenizer uses strict control token grammar for turns, channels, modalities, and tool lifecycle.15 If you drift from this format, quality drops fast and loop behavior can collapse (including repetition pathologies observed by users).16

Core Turn and Multimodal Format

Turn boundaries:

<|turn|>starts a turn<turn|>ends it15

Media placeholders:

<|image|><|audio|>15

Example format:

<|turn|>user Describe the details in this image: <|image|> and transcribe the audio here: <|audio|><turn|>

<|turn|>model

This is not optional ceremony. The model expects these boundaries.

Thinking Mode: Powerful, Expensive, and Easy to Mismanage

Thinking mode is activated with <|think|> in system instruction context.15 The model then emits internal reasoning in thought channels bounded by:

<|channel|><channel|>15

Example:

<|turn|>system You are an expert code analyst. <|think|><turn|>

<|turn|>user Why is this function returning a null pointer?<turn|>

<|turn|>model <|channel|>thought The variable is initialized inside the loop but accessed outside... <channel|> The error occurs because the variable scope is restricted to the inner loop block.<turn|>

In my experiments, the hard part was not enabling thought mode. It was managing its token cost over long sessions.

- Keeping every thought block causes context bloat and TTFT drag.15

- Stripping everything can make agents forget prior rationale and loop in circles.15

The practical middle-ground: summarize thought traces and keep compact rationale context.15 Also, instructing "think efficiently" or low-depth reasoning in system instructions can materially reduce compute overhead without disabling reasoning entirely.15

Function Calling and Stop-Sequences: Where Agent Systems Usually Fail

Gemma 4 exposes native tool lifecycle tokens.15 This is great, but only if your host runtime respects them correctly.

Core flow:

- define tools via

<|tool|> ... <tool|>in system context15 - halt generation when

<|tool_call|>appears15 - execute host function with protected parameters (including

<|"|>delimiters)15 - inject result via

<|tool_response|> ... <tool_response|>15

Canonical pattern:

<|turn|>model <|channel|>thought I need the active user count to calculate the server load. <channel|><|tool_call|>call:get_user_count{region:<|"|>us-west<|"|>}<tool_call|><|tool_response|>

Then host app appends:

<|turn|>model <|tool_call|>call:get_user_count{region:<|"|>us-west<|"|>}<tool_call|><|tool_response|>response:get_user_count{status:200, users:45000}<tool_response|>

Critical warning from implementation behavior: if thought text and tool call are in the same turn, stripping the thought segment can sever planning-to-argument mapping and degrade tool reliability.15

Ecosystem Reality Check: Great Momentum, But Quantization and Runtime Friction Are Real

Gemma 4 is well represented across the open stack (Hugging Face, vLLM, llama.cpp, TRL, Unsloth paths).2 But deployment is not one-click, especially once you touch larger checkpoints, long context, and multimodal workloads.

Quantization Is Not a Free Lunch

I saw an important pattern in available benchmark discussions: quantization outcomes are highly workload-dependent for Gemma 4.

For example, on high-throughput serving setups, AWQ INT8 can underperform badly during prefill compared to native bfloat16 paths due to dequantization overhead.14 Reported example: ~1066 t/s (bf16) vs ~430 t/s (INT8) at 2048-token prompt processing in one benchmark context.14

Meanwhile, AWQ INT4 can be excellent for short prompts and lower TTFT (reported ~247 ms at short prefill in the cited benchmark context).14

So the real lesson is not "INT8 bad" or "INT4 best." The lesson is:

- optimize quantization choice for your actual traffic shape (long prefill vs short-turn chat)

- benchmark on your own pipeline before hard commitments

[!WARNING] Lab Log #3 - Quantization trap I hit: I assumed INT8 would be the safe default for everything. For long prefill workloads, that assumption cost me latency. The fix was workload-specific profiling, not format loyalty.

KV Cache Economics Can Flip with Quantized Weights

As weight memory shrinks, available VRAM for KV cache can grow dramatically.14 In long-context use cases, that can matter more than raw kernel speed because keeping context fully resident in VRAM avoids painful swaps.

If your product is long-session agentic behavior, memory geometry decisions are product decisions.

Fine-Tuning Trap: Do Not Accidentally Remove the Model's Reasoning Habit

One issue I care about as a student: instruction-tuned Gemma 4 models are reasoning-biased.15 If you fine-tune with plain request-response data that lacks thought-structure mapping, you can degrade reasoning performance.

Mitigation pattern documented in guidance:

- inject empty thought-channel scaffolding in training formatting

(<|turn|>model <|channel|>thought <channel|>)15

That keeps alignment between training examples and the model's expected reasoning state machine.

ONNX Blockers: PLE Is a Real Compatibility Problem Right Now

If you planned to deploy E2B/E4B through current ONNX Runtime GenAI stacks, this is where the pain starts.

The runtime path historically assumes a single embedding flow, while Gemma 4 edge variants can emit bifurcated embedding streams because of PLE.10 Attempted workarounds can fail with shape inference and matmul dimension incompatibilities.10

Until runtime support catches up, practical alternatives include other inference frameworks and mobile-native paths where architecture support is explicit.5,6,10

What I Learned After Testing: Gemma 4 Is a Strategic Stack, Not Just a Model Launch

If you are building with modern AI trends like local AI agents, privacy-first AI, open-source LLM deployment, multimodal edge inference, and on-device coding copilots, Gemma 4 is one of the most important releases to understand this cycle.

My personal verdict, from researcher mode:

- Big win: hardware-aligned architecture (PLE + granular MoE + long-context efficiency) gives real deployment flexibility.3,6,12

- Big win: open licensing under Apache 2.0 gives product teams room to ship without legal anxiety.1

- Big win: native tooling semantics and thought channels make agent loops more realistic in local-first systems.5,15

- Real constraint: memory requirements and quantization trade-offs still decide who can deploy what.14

- Real constraint: runtime/tooling compatibility (especially ONNX for PLE variants) is not solved end-to-end yet.10

Build-Ready Checklist (for readers who want to test today)

- Pick the variant by hardware first, benchmark score second.

- Define prompt/tool grammar before scaling traffic.

- Profile prefill and decode separately for each quantization plan.

- Budget VRAM for both weights and KV cache, not just model size.

- Keep fallback runtime options if ONNX path is a requirement.

So no, this is not "the model that solves everything."

But yes, it is a serious milestone in the transition from cloud-first assistant UX to local-first autonomous systems.

And as someone who learns by breaking things, benchmarking things, and rebuilding things: this is exactly the kind of release that rewards hands-on builders.

If you are experimenting right now, do not just ask "Is Gemma 4 good?" Ask:

- Which variant matches my hardware and latency budget?

- How do I structure prompt/tool channels for stable loops?

- Which quantization profile matches my actual traffic pattern?

- How much context can I afford before UX degrades?

That is where the real engineering conversation starts.

Resources and Lab Trail

Works cited

- Gemma 4 available on Google Cloud, accessed April 18, 2026, https://cloud.google.com/blog/products/ai-machine-learning/gemma-4-available-on-google-cloud

- Gemma 4: Our most capable open models to date - Google Blog, accessed April 18, 2026, https://blog.google/innovation-and-ai/technology/developers-tools/gemma-4/

- What Is Google Gemma 4? Architecture, Benchmarks, and Why It Matters - WaveSpeed AI, accessed April 18, 2026, https://wavespeed.ai/blog/posts/what-is-google-gemma-4/

- Why The Google Gemma 4 AI Model Is A Turning Point For Local AI Workflows - Reddit, accessed April 18, 2026, https://www.reddit.com/r/AISEOInsider/comments/1sc6q0i/why_the_google_gemma_4_ai_model_is_a_turning/

- The new standard for local ... - Android Developers Blog: Gemma 4, accessed April 18, 2026, https://android-developers.googleblog.com/2026/04/gemma-4-new-standard-for-local-agentic-intelligence.html

- Welcome Gemma 4: Frontier multimodal intelligence on device - Hugging Face, accessed April 18, 2026, https://huggingface.co/blog/gemma4

- Gemma 4 model overview | Google AI for Developers, accessed April 18, 2026, https://ai.google.dev/gemma/docs/core

- Gemma 4 model card | Google AI for Developers, accessed April 18, 2026, https://ai.google.dev/gemma/docs/core/model_card_4

- Gemma 4: A Practical Guide for Developers - DEV Community, accessed April 18, 2026, https://dev.to/arshtechpro/gemma-4-a-practical-guide-for-developers-2co5

- Feature Request: Support for Google Gemma 4 model family (PLE architecture, variable head dims, KV cache sharing) · Issue #2062 · microsoft/onnxruntime-genai - GitHub, accessed April 18, 2026, https://github.com/microsoft/onnxruntime-genai/issues/2062

- Bringing AI Closer to the Edge and On-Device with Gemma 4 | NVIDIA Technical Blog, accessed April 18, 2026, https://developer.nvidia.com/blog/bringing-ai-closer-to-the-edge-and-on-device-with-gemma-4/

- What Is Gemma 4's Mixture of Experts Architecture? How 26B Parameters Run Like a 4B Model | MindStudio, accessed April 18, 2026, https://www.mindstudio.ai/blog/gemma-4-mixture-of-experts-architecture-explained

- What Is the Gemma 4 Mixture of Experts Architecture? How 26B Parameters Run Like 4B, accessed April 18, 2026, https://www.mindstudio.ai/blog/gemma-4-mixture-of-experts-architecture

- Gemma 4 Day-1 Inference on NVIDIA DGX Spark — Preliminary Benchmarks, accessed April 18, 2026, https://forums.developer.nvidia.com/t/gemma-4-day-1-inference-on-nvidia-dgx-spark-preliminary-benchmarks/365503

- Gemma 4 Prompt Formatting | Google AI for Developers, accessed April 18, 2026, https://ai.google.dev/gemma/docs/core/prompt-formatting-gemma4

- Gemma 4 format? : r/KoboldAI - Reddit, accessed April 18, 2026, https://www.reddit.com/r/KoboldAI/comments/1seqyr3/gemma_4_format/

- unsloth/gemma-4-26B-A4B-it-GGUF - Hugging Face, accessed April 18, 2026, https://huggingface.co/unsloth/gemma-4-26B-A4B-it-GGUF

- nvidia/Gemma-4-31B-IT-NVFP4 - Hugging Face, accessed April 18, 2026, https://huggingface.co/nvidia/Gemma-4-31B-IT-NVFP4